AppSheet is a Google product that lets you use Google Sheets as a backend database for a mobile app that works in both the Android and Apple ecosystems. I encourage you to check it out, as it’s become a go-to tool for me when I think about interacting with students and colleagues at my school. Quick note: it’s really designed to be used by people on the same domain (yourcoolschool.edu, for example), not to produce a true mobile app for the world. This post is about some brainstorming I’ve been doing about a campus app that could be useful.

What I’ve done so far

Here’s a quick list of the apps I’ve built and some of the cool features they make use of:

- Hamline Go

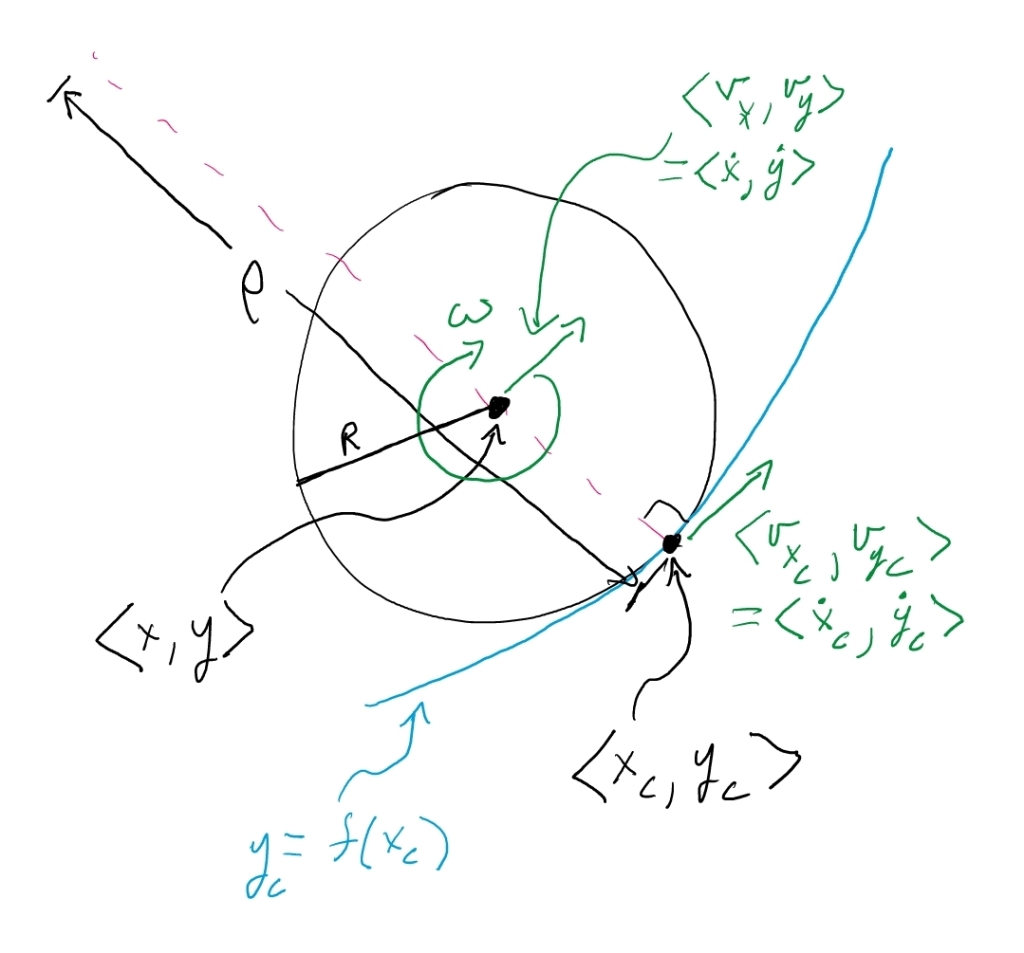

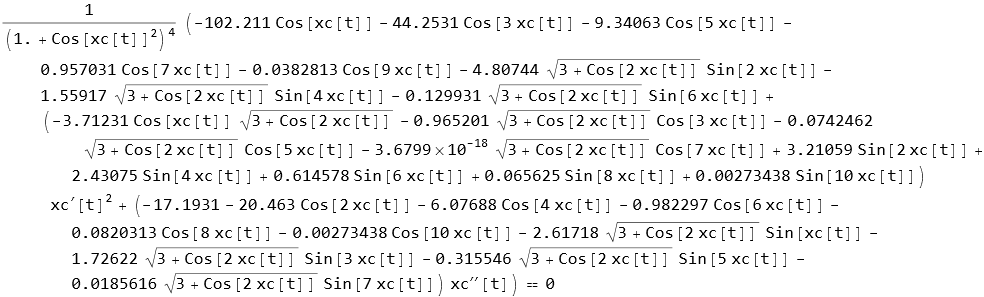

  - This is an app I built to try to help build community on campus. Students can see faculty and staff avatars on a map and collect them by clicking on them. They get one point for every collection unless it’s a faculty member who teaches in their major, in which case it’s 5 points. If instead they actually talk to a faculty or staff member and get their hourly code, they get 10 points. Certain offices on campus also have hourly codes. I also built in flash (1 hour), daily, and weekly challenges like “write a haiku about your major” or “prove you found this spot on campus”. Point leaders at the end of each month get declining balance (money) prizes.

  - Tools used:

    - Google Maps

    - Login features (checks the user’s email and crosschecks the faculty in their declared major)

    - Editable images (you can draw on images before submitting them for the challenges)

    - Google Apps Script running independently to update both the avatar locations (randomized on our campus) and the hourly codes

- Majors t-shirts

  - Every major program was encouraged to design a t-shirt that we would then order for any declared major who wanted one. I built an app to show students the designs available to them (only the designs for their declared majors) and let them submit the size they wanted.

  - Tools used:

    - Login (check their major and crosscheck against the submitted designs)

    - Selfie collection (students are encouraged to submit a selfie wearing their t-shirts so we can make a collage)

- Scavenger hunt

  - I made an app that encourages first-year students to learn about the various offices on campus. Each office had a QR code displayed that, if a student scanned it using the app, indicated that the student had visited that office.

  - Tools used:

    - QR code reader

    - Login
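
The Hamline Go point rules and the independently running update job described above can be sketched in a few lines. This is only an illustration in Python, not the actual app (which lives in AppSheet, with the update job in Google Apps Script). The names `Employee`, `collection_points`, `random_location`, and `new_hourly_code`, the bounding-box coordinates, and the 4-character code format are all assumptions made up for the example.

```python
import random
import secrets
import string
from dataclasses import dataclass

# Hypothetical bounding box for a campus map (made-up coordinates).
CAMPUS_BOUNDS = {"south": 44.9640, "north": 44.9680,
                 "west": -93.1670, "east": -93.1620}


@dataclass
class Employee:
    email: str
    is_faculty: bool
    majors_taught: frozenset = frozenset()


def collection_points(student_majors, employee, used_hourly_code=False):
    """Points for one collection, following the rules described in the post."""
    if used_hourly_code:
        return 10  # the student talked to the person and entered their code
    if employee.is_faculty and employee.majors_taught & set(student_majors):
        return 5   # faculty member who teaches in the student's major
    return 1       # any other avatar clicked on the map


def random_location(rng=random):
    """Pick a uniformly random point inside the bounding box."""
    lat = rng.uniform(CAMPUS_BOUNDS["south"], CAMPUS_BOUNDS["north"])
    lng = rng.uniform(CAMPUS_BOUNDS["west"], CAMPUS_BOUNDS["east"])
    return lat, lng


def new_hourly_code(length=4):
    """Generate a short random code; length and alphabet are assumptions."""
    alphabet = string.ascii_uppercase + string.digits
    return "".join(secrets.choice(alphabet) for _ in range(length))
```

A scheduled job would call `random_location()` once per avatar and `new_hourly_code()` once per person or office, then write the results back to the sheet the app reads from.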

I’ve made it quite a way up the learning curve and picked up some really useful tips and tricks along the way:

- Make a slice of the users data called “me”. Have it be a filter of all the users that match useremail() (a built-in function). Essentially it’s a data source with just one row in it. Then I use index(me[id], 1) to get the current user’s id when doing a lot of the crosschecking mentioned above. I do the same thing on any of the related collections. In Hamline Go, for example, I make a “my avatars” slice to show the avatars that have been collected by that user. Then I can send that slice as the core data to a “view” that shows avatars.

- Use an automation to send a notification whenever there’s a new flash challenge. They only last an hour, so it’s helpful to let the students know there’s a new one. These notifications come as push notifications to the students’ phones.

- Pre-fill forms using LinkToForm, which can take a collection of values to pre-fill form elements. You can then hide those elements in the form view, so it looks magical!

- Send email notifications to people that include live forms right in Gmail. This works really well for approvals.
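
The “me” slice trick from the first tip is really just a filter followed by “take the first row”. Here’s a rough Python analogue of that logic, not AppSheet syntax. The table layouts, column names (`email`, `id`, `user_id`, `avatar_id`), and sample rows are hypothetical.

```python
# Hypothetical rows standing in for the sheets behind the app.
USERS = [
    {"id": "u1", "email": "ada@yourcoolschool.edu"},
    {"id": "u2", "email": "ben@yourcoolschool.edu"},
]
AVATARS = [{"id": "a1", "name": "Prof. X"}, {"id": "a2", "name": "Registrar"}]
COLLECTIONS = [
    {"user_id": "u1", "avatar_id": "a2"},
    {"user_id": "u2", "avatar_id": "a1"},
]


def me_slice(users, current_email):
    """Rows of the users table whose email matches the signed-in user."""
    return [row for row in users if row["email"] == current_email]


def current_user_id(users, current_email):
    """Analogue of index(me[id], 1): the id from the first row of the slice."""
    me = me_slice(users, current_email)
    # AppSheet's index() counts from 1; Python lists count from 0.
    return me[0]["id"] if me else None


def my_avatars(collections, avatars, user_id):
    """Avatars this user has collected, like a "my avatars" slice."""
    collected = {c["avatar_id"] for c in collections if c["user_id"] == user_id}
    return [a for a in avatars if a["id"] in collected]
```

For example, `my_avatars(COLLECTIONS, AVATARS, current_user_id(USERS, "ada@yourcoolschool.edu"))` returns only the Registrar avatar, which is the kind of per-user list a view would be pointed at.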

What I’m hoping to do

I’d love to create an app that I’m currently calling “Hamline All Around”. Here are some of the features I hope to build in:

- Somehow provide a navigation aid through our various systems. We get a lot of feedback from students who say they just don’t understand how to accomplish various things, especially the ones that are supposed to be providing valuable resources to them. A great example is our emergency grant program.

- One thing I’m thinking of is a dynamic FAQ that might ask what sort of thing they’d like to accomplish and then quickly get them to the office they should start with.

- “Find a study buddy!” I gather other schools do this, so I built a quick test to see if it would work. The student logs in and it shows them the classes they’re taking. For each class they can “raise their hand.” If they do, they see all the other students who’ve done the same, all of whom are indicating that they’re interested in finding study buddies. They can see each other’s emails, or potentially use the app’s push notification system to communicate. I also made a form that lets them say “I plan to study tomorrow night in the library from 7-8” and the others who’ve raised their hands can indicate if they plan to come. This is displayed in a nice calendar format that looks a lot like Google Calendar.

- Show the student group and athletic team calendar events in one place (right now you have to subscribe separately to both iCal feeds, but AppSheet can do the subscription and display them in the app).

- Event check-in: All employees and students have bar codes on the back of their IDs, and AppSheet can read them (it’s a setting for one of the built-in form elements). It would be very easy to build an event check-in system where the event runners (multiple of them) have the app running on their phones and scan in the students, staff, and faculty who attend. Once they do, the app on an attendee’s phone could become much more functional (i.e., the app, upon being updated, would detect that the person is checked in and would then show all the relevant functionality for the event).

- Directions: Often it’s not enough to tell people what building things are in. Directions for how to actually get to the office can come in handy. I’ve been wondering about videos showing someone literally walking from a common place to an office, all the while describing what useful things the office can do for students.
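
To make the “raise your hand” idea from the study buddy bullet concrete, here’s a minimal sketch of the visibility rule: you only see other hand-raisers in a class once you’ve raised your own hand in it. It’s plain Python rather than anything AppSheet-specific, and the class name `StudyBuddyBoard`, its methods, and the course codes in the usage note are invented for illustration.

```python
from collections import defaultdict


class StudyBuddyBoard:
    """Tracks who has raised a hand in which class (hypothetical model)."""

    def __init__(self, enrollments):
        # enrollments: dict mapping student email -> set of course ids
        self.enrollments = enrollments
        self.raised = defaultdict(set)  # course id -> emails with hands up

    def raise_hand(self, email, course):
        """Record interest, but only for a class the student is taking."""
        if course not in self.enrollments.get(email, set()):
            raise ValueError(f"{email} is not enrolled in {course}")
        self.raised[course].add(email)

    def buddies(self, email, course):
        """Other hand-raisers in the class, visible only to hand-raisers."""
        hands = self.raised.get(course, set())
        if email not in hands:
            return []
        return sorted(hands - {email})
```

With two students enrolled in a hypothetical “PHYS1230”, neither sees the other until both have called `raise_hand`. Whether to expose emails at that point or route contact through push notifications is a separate design choice.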

Help!

I’m excited to work on this, but I’d love some more brainstorming, including poking at some of these ideas a little. Here are some starters for you:

- I love this. What I especially love is . . .

- This is dumb. What I especially dislike is . . .

- Wait, you’re tracking the location of all faculty and staff in real time?! (note: it took me a long time to convince people I wasn’t doing that. I call the things avatars and try to make it clear that I just randomly place them on the campus map. However, several faculty and staff were deeply suspicious of this app and asked to be taken out of the app.)

- I think the “find a study buddy” app is really interesting. Have you thought about . . .

- I think the “find a study buddy” app is really disturbing. Instead you should . . .

- So after you figure out all the views and the data connections you have to learn how to write it for both the Android and iOS systems?! (No! AppSheet does all that for me. All the students have to install is AppSheet, which already exists in both ecosystems.)

- We’ve got a student app on our campus and I love it. Here are my favorite parts . . .

- We’ve got a student app on our campus and I hate it. What would make it so much better is . . .

- Here’s some additional functionality you should think of . . .

- Can you please do some tutorials on how to do all this in AppSheet?

- What’s an example of a haiku about a major?

- Minnesota Law

- Ins and Outs of Policing

- Criminal Justice